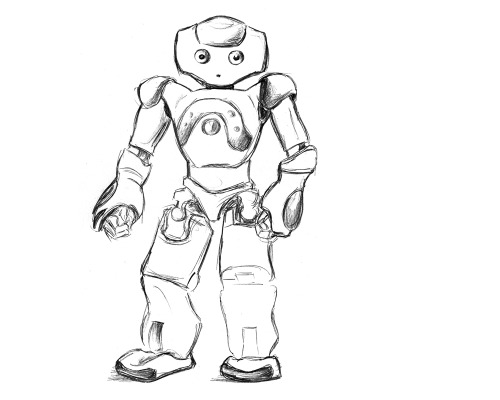

In Kognit (2014–2015), we explore mixed reality as a means of helping dementia patients. Dementia is a general term for a decline in mental ability severe enough to interfere with daily life; memory loss is one example, and Alzheimer’s is the most common type of dementia. Mixed reality refers to the merging of real and virtual worlds to produce new episodic memory visualisations in which physical and digital objects co-exist and interact in real time. Cognitive models are approximations of a patient’s mental abilities and limitations involving conscious mental activities (such as thinking, understanding, learning, and remembering). We are concerned with fundamental research on coupling artificial-intelligence-based situation awareness with augmented cognition for the patient.

Kognit is a BMBF fundamental research pre-project based on ERmed and RadSpeech.

The project focuses on body sensor interpretation, activity recognition, and proactive episodic memory aid:

Cognitive Model

- Artificial Intelligence learning system for activity recognition

- Modelling of the situation

- Modelling of the cognitive impairments

- Modelling of the augmented cognition

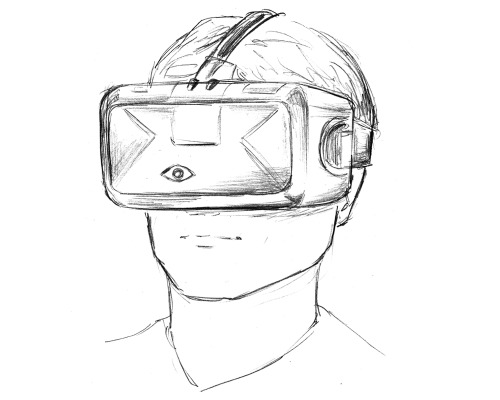

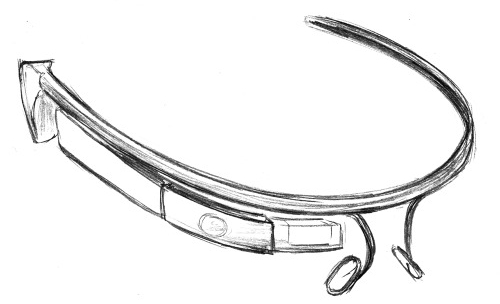

Mixed Reality

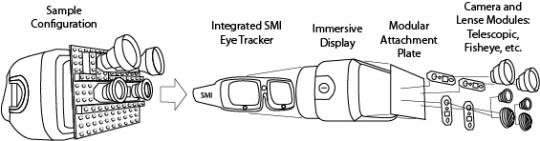

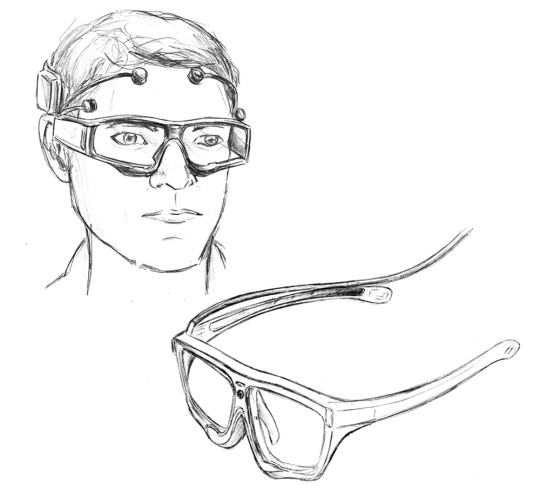

- Real-time eye tracker and video camera input

- Head-mounted display output

- Real-time registration into the field of view

To provide a new foundation for managing dynamic content and to improve the usability of optical see-through head-mounted displays (HMDs) and mobile eye tracker systems, we implement a salience-based activity recognition system and combine it with an intelligent multimodal display management system for text and images.
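To make the combination of salience-based recognition and display management more concrete, the following is a minimal illustrative sketch in Python. It is not the Kognit implementation. It assumes that salience can be approximated by gaze dwell time on labelled image regions, that the object with the longest dwell hints at the current activity, and that an overlay is best placed in the display corner farthest from the current gaze point. All names (`GazeSample`, `Region`, `ACTIVITY_BY_OBJECT`, `overlay_corner`), the threshold, and the example objects are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class GazeSample:
    t: float  # timestamp in seconds
    x: float  # normalised horizontal gaze position, 0..1
    y: float  # normalised vertical gaze position, 0..1


@dataclass(frozen=True)
class Region:
    label: str  # hypothetical object label from the scene camera
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, s: GazeSample) -> bool:
        return self.x0 <= s.x <= self.x1 and self.y0 <= s.y <= self.y1


def dwell_times(samples, regions):
    """Sum the time gaze spends inside each region (assumed salience proxy)."""
    dwell = defaultdict(float)
    for prev, cur in zip(samples, samples[1:]):
        for region in regions:
            if region.contains(prev):
                dwell[region.label] += cur.t - prev.t
                break
    return dict(dwell)


def most_salient(dwell, min_dwell=0.3):
    """Return the label with the longest dwell, or None below the threshold."""
    if not dwell:
        return None
    label, seconds = max(dwell.items(), key=lambda kv: kv[1])
    return label if seconds >= min_dwell else None


# Hypothetical mapping from attended object to a likely activity.
ACTIVITY_BY_OBJECT = {"kettle": "making tea", "pill box": "taking medication"}


def overlay_corner(last: GazeSample) -> str:
    """Pick the display corner farthest from the gaze point to avoid occlusion."""
    corners = {
        "top-left": (0.0, 0.0),
        "top-right": (1.0, 0.0),
        "bottom-left": (0.0, 1.0),
        "bottom-right": (1.0, 1.0),
    }
    return max(
        corners,
        key=lambda c: (corners[c][0] - last.x) ** 2 + (corners[c][1] - last.y) ** 2,
    )
```

In this sketch, a caller would pass a short window of gaze samples and the currently detected regions to `dwell_times`, look up the result of `most_salient` in `ACTIVITY_BY_OBJECT`, and render the corresponding text or image hint at the corner returned by `overlay_corner`. A real system would likely replace each step with learned models and proper registration into the field of view.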